
Ethics are important to consider in any field. Without them, people can do great harm to others. It is well known that research ethics has a messy history. From medicine to psychology to anthropology to information science, researchers have done harm when ethical guidelines were less structured. Ethical guidelines help researchers assess the risk that something will cause harm and make decisions to mitigate that risk. The most difficult part, however, is that all research carries at least a little risk. Even the most benign research can involve risks to the participants or the researchers. And so the processes used to ensure ethical research (e.g., ethical review boards, data management plans, data protection committees) are all important for making sure that researchers fully consider the risks of their research and have plans in place to mitigate them.

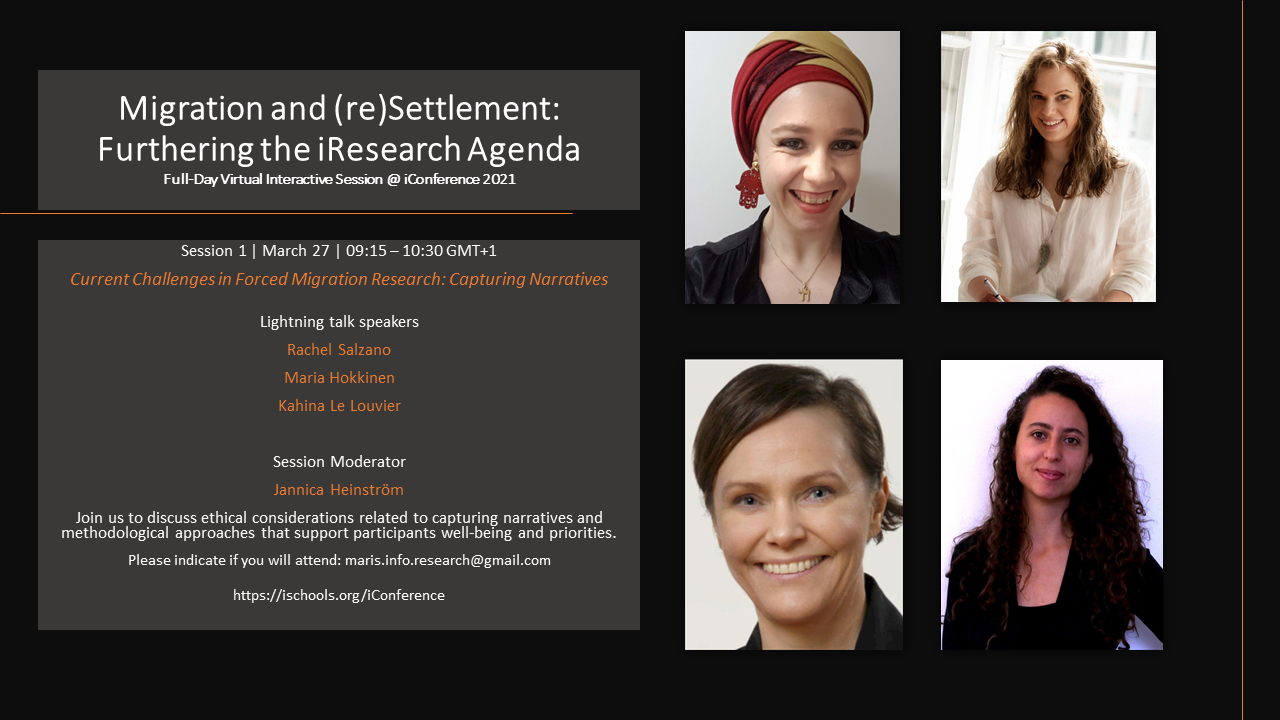

As I have been working through my own ethics application for my PhD, I have noticed that the question of what is most ethical can be complex. For example, much research is conducted digitally, especially during the past year, and so there is an emphasis on making sure that research data about human participants is protected. Data breaches happen, and if a participant's personal data is leaked, or deliberately used in a malicious manner, harm could come to the participant. As many of us are probably aware, organisations that provide access to digital products aren't always the most secure, and so data protection committees, which review how a researcher plans to collect and store participant data, are very concerned with the protection of that data (hence the name of the committee).

The ethical question that comes into play, however, is whether it is more ethical to use a more secure system that potential participants do not know (and may not be comfortable with) or a less secure system that potential participants do know (and are comfortable with). Does the abstract idea of protecting participant data outweigh participant comfort? If a participant is aware of the risks and still wishes to use a less secure system, should researchers push them into using a system they aren't comfortable with? Hopefully your answer to that last question is 'no', but in that case, what about the voices that are excluded from our work when we require participants to use a system they are uncomfortable using?

Past research, and much of current published research, comes from a very specific perspective. Voices have been excluded for a long time, and they continue to be excluded. Research is now opening up to new perspectives, but it is still dominated by the traditions of the past. If we deliberately exclude the voices of particular participants because of data protection, are we reinforcing the status quo by ignoring voices that don't fit our research process?

These are important points to consider when thinking about ethical research, and I invite you to join me and my colleagues at an interactive session at the iConference 2021 (conference registration required; indicate attendance by e-mailing [email protected]) as we mull over this and other questions regarding ethics and research on migration and (re)settlement. My colleagues and I will be presenting lightning talks (7 minutes or so), and then the floor will open for discussion amongst all attendees. I hope to see you there! (Or, if you are unable to attend, I hope you will discuss these ideas elsewhere.)

